SharpContour: A Contour-based Boundary Refinement Approach for Efficient and Accurate Instance Segmentation

Chenming Zhu1 † Xuanye Zhang2 † Yanran Li4 Liangdong Qiu1,2 Kai Han5 Xiaoguang Han1,3 ‡

†The two authors contributed equally to this paper

‡Corresponding email: hanxiaoguang@cuhk.edu.cn

1SSE, The Chinese University of Hong Kong, Shenzhen

2Shenzhen Research Institute of Big Data

3FNii, The Chinese University of Hong Kong, Shenzhen

4Birmingham University

5The University of Hong Kong

CVPR2022

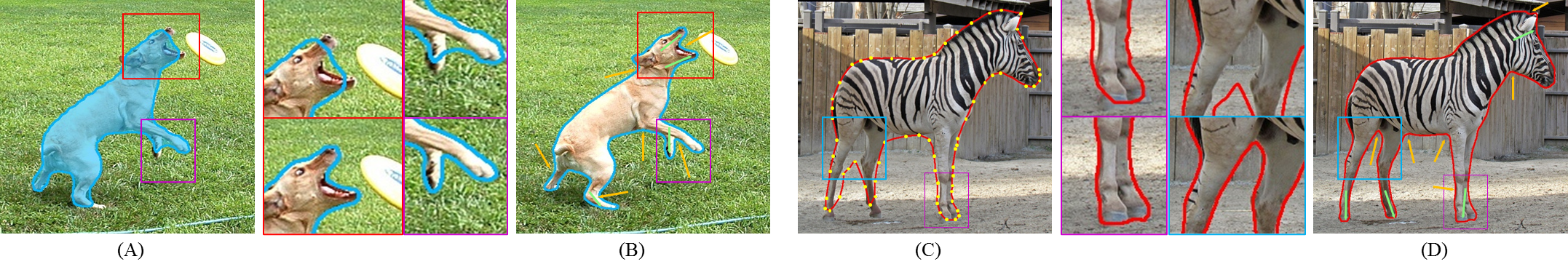

Fig. 1 Instance segmentation with SharpContour.

Left: A is the coarse mask predicted by Mask R-CNN and B is the refinement result of SharpContour.

Right: C is the coarse contour generated by DANCE and D is the refinement result of SharpContour.

In the corner areas, SharpContour yields significant improvements.

Abstract

Instance segmentation has achieved excellent performance, but quality in the boundary area remains unsatisfactory, which has drawn growing attention to boundary refinement. For practical use, an ideal post-processing refinement scheme should be accurate, generic and efficient. However, most existing approaches perform pixel-wise refinement, which either introduces a massive computation cost or is designed specifically for particular backbone models. Contour-based models are efficient and generic enough to be incorporated with any existing segmentation method, but they often generate over-smoothed contours and tend to fail in corner areas. In this paper, we propose an efficient contour-based boundary refinement approach, named SharpContour, to tackle segmentation of the boundary area. We design a novel contour evolution process together with an Instance-aware Point Classifier. Our method deforms the contour iteratively by updating offsets in a discrete manner. Unlike existing contour evolution methods, SharpContour estimates each offset more independently, so it predicts much sharper and more accurate contours. Notably, our method is generic and works seamlessly with diverse existing models at a small computational cost. Experiments show that SharpContour achieves competitive gains whilst preserving high efficiency.

Video

Publication

Paper - ArXiv - abs (pdf) | Github - Coming soon

If you find our work useful, please consider citing it:

Coming soon

Method

SharpContour obtains the initial contour from coarse segmentation results and deforms the contour to achieve boundary refinement.

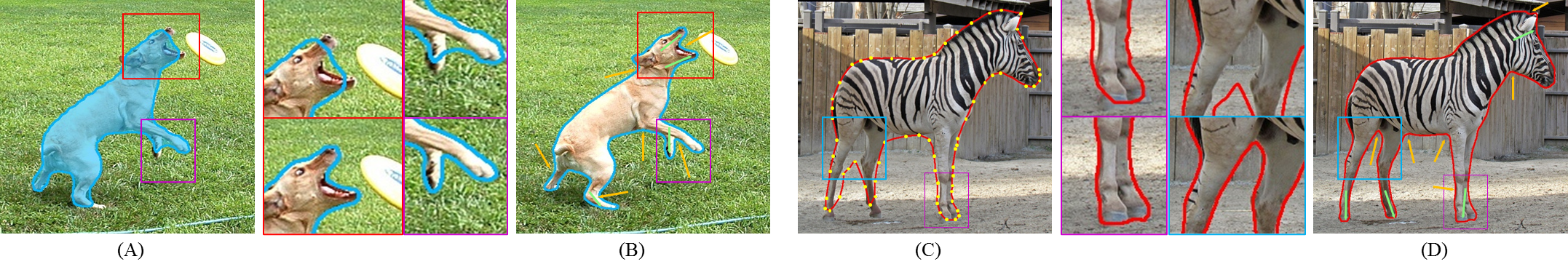

Fig. 2 shows the deformation process of SharpContour:

1) SharpContour obtains the normal direction.

2) SharpContour predicts the inner/outer state of each vertex to decide whether it deforms along the negative or positive normal direction.

3) SharpContour decides the step size.

4) SharpContour obtains the moving step number based upon the position of the flipping point.

5) SharpContour estimates the offsets and deforms the contour.

Fig. 2 Contour Evolution of SharpContour.
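The five steps above can be illustrated with a minimal, dependency-free sketch. It is not the authors' implementation. The names `outward_normals`, `evolve_once`, `is_inside`, `step_size` and `max_steps` are hypothetical. `is_inside` is a plain callable standing in for the learned Instance-aware Point Classifier, the step size is a fixed constant rather than something decided per vertex, and each vertex stops at the last probed position before its state flips, which is only one possible reading of step 4.

```python
import math
from typing import Callable, List, Tuple

Point = Tuple[float, float]


def outward_normals(contour: List[Point]) -> List[Point]:
    """Step 1: unit normals of a closed polygon, oriented to point outward."""
    n = len(contour)
    # Shoelace signed area: positive when vertices run counter-clockwise.
    area = 0.5 * sum(
        contour[i][0] * contour[(i + 1) % n][1]
        - contour[(i + 1) % n][0] * contour[i][1]
        for i in range(n)
    )
    sign = 1.0 if area >= 0 else -1.0
    normals = []
    for i in range(n):
        (px, py), (qx, qy) = contour[i - 1], contour[(i + 1) % n]
        tx, ty = qx - px, qy - py            # tangent from the two neighbours
        length = math.hypot(tx, ty) or 1.0   # guard against a zero tangent
        normals.append((sign * ty / length, -sign * tx / length))
    return normals


def evolve_once(
    contour: List[Point],
    is_inside: Callable[[Point], bool],  # stand-in for the point classifier
    step_size: float = 1.0,              # step 3: fixed here (assumption)
    max_steps: int = 5,
) -> List[Point]:
    """One deformation pass; every vertex is moved independently."""
    refined = []
    for (x, y), (nx, ny) in zip(contour, outward_normals(contour)):
        # Step 2: a vertex inside the object moves outward (+normal),
        # a vertex outside the object moves inward (-normal).
        state = is_inside((x, y))
        sign = 1.0 if state else -1.0
        # Step 4: walk in discrete steps until the predicted state flips.
        steps = 0
        for k in range(1, max_steps + 1):
            probe = (x + sign * k * step_size * nx, y + sign * k * step_size * ny)
            if is_inside(probe) != state:
                break  # flipping point reached
            steps = k
        # Step 5: offset = direction * step size * step number.
        offset = sign * steps * step_size
        refined.append((x + offset * nx, y + offset * ny))
    return refined


# Usage: refine a coarse 16-vertex circle toward a disk-shaped "object".
coarse = [(6 * math.cos(2 * math.pi * i / 16), 6 * math.sin(2 * math.pi * i / 16))
          for i in range(16)]
refined = evolve_once(coarse, lambda p: math.hypot(p[0], p[1]) < 9.5)
```

Because each vertex searches along its own normal and stops at its own flipping point, the offsets do not depend on neighbouring vertices. This is one way to picture the abstract's claim that offsets are estimated more independently. Calling `evolve_once` repeatedly gives an iterative evolution.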

Result

Qualitative Results

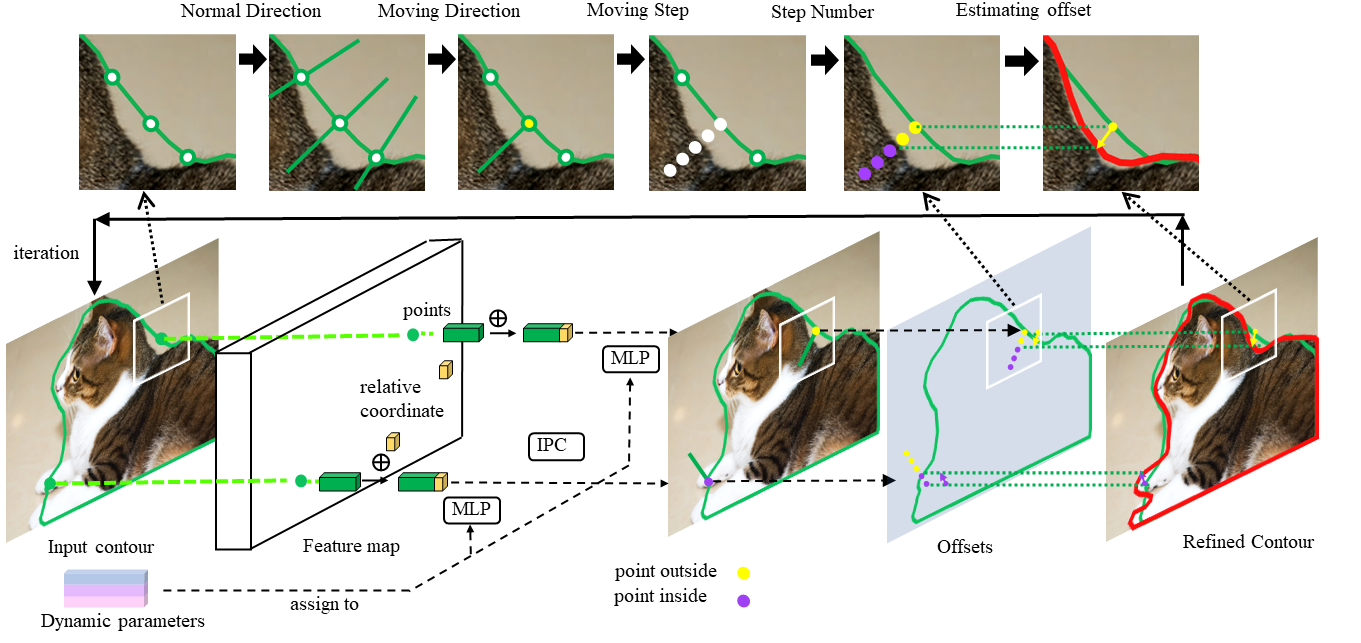

COCO Fig. 3 shows results on the COCO dataset. We use SharpContour to refine the segmentation results of different models: the top row shows results for DANCE and the bottom row shows results for Mask R-CNN. For each example, the left image is the result before refinement and the right image is our result. As illustrated, SharpContour refines the segmentation results near instance boundaries.

Fig. 3 Qualitative Results on the COCO dataset

CityScapes Fig. 4 shows the refinement results of SharpContour on the CityScapes dataset. For each example, the left image is the result of Mask R-CNN and the right image is our result. SharpContour improves the contour near instance boundaries.

Fig. 4 Qualitative Results on the CityScapes dataset

Quantitative Results

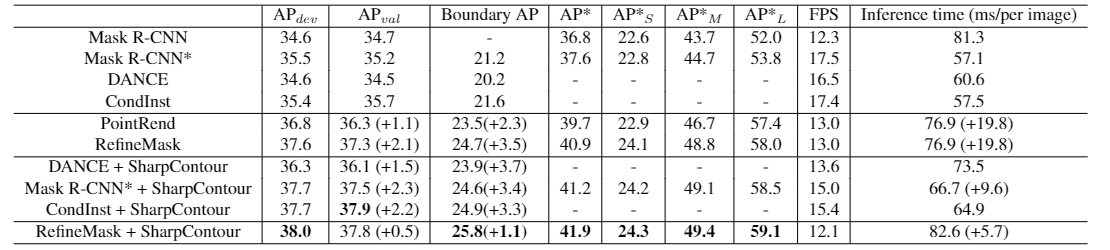

COCO Tab. 1 shows the quantitative results of SharpContour on the COCO dataset. APdev denotes evaluation results on test-dev, and the other columns denote evaluation results on val2017. “Mask R-CNN” is the original Mask R-CNN, and “Mask R-CNN*” is the improved version in Detectron2. All methods are trained with the 1x schedule using an R50-FPN backbone. FPS is measured on a single Tesla V100 GPU. SharpContour brings significant AP improvements for DANCE, Mask R-CNN and CondInst. Moreover, SharpContour achieves competitive performance compared with other boundary refinement approaches, with the highest efficiency.

Tab. 1 Comparisons on COCO val2017 and test-dev.

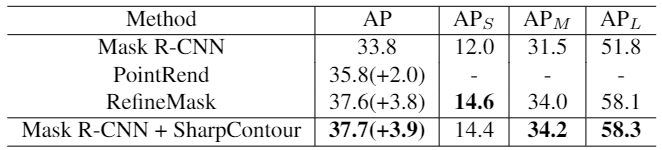

CityScapes Tab. 2 shows the quantitative results of SharpContour on the CityScapes dataset. The training settings are the same for all models: trained on fine annotations for 64 epochs, using multi-scale training and ResNet-50 with FPN.

Tab. 2 Comparisons on CityScapes